A proposed class action lawsuit alleges that Apple is using privacy claims as cover for failing to stop the storage of child sexual abuse material (CSAM) on iCloud and alleged grooming over iMessage, AppleInsider reports.

The suit was filed with the US District Court for the Northern District of California. From the court filing: Despite Apple’s abundant resources, it opts not to adopt industry standards for CSAM [Child Sexual Abuse Material] detection and instead shifts the burden and cost of creating a safe user experience to children and their families, as evidenced by its decision to completely abandon its CSAM detection tool. Apple’s privacy policy states that it would collect users’ private information to prevent things like CSAM, but it fails to do so in practice — a failure that it already knew of.

Apple’s incredulous rhetoric hedges one notion of privacy against another: Apple creates the false narrative that by detecting and deterring CSAM, it would run the risk of creating a surveillance regime that violates other users’ privacy. By framing privacy and safety as a zero sum game, Apple made choices to overlook safety, even though other tech companies have deployed technology to disrupt the distribution of CSAM.

But children’s safety should not be lost on Apple’s notion of privacy: comprehensive privacy should encompass safety for all consumers. In fact, in both online and offline activities, our society frequently harmonizes privacy and safety, especially in contexts where children and minors are involved. Various industries and fields strike a balance between reasonable expectations of privacy and reasonable expectations of safety: showing a government ID when purchasing alcohol at a restaurant; granting parents and guardians access to a minor’s medical records; requiring teenage drivers to obtain a learner’s permit before a driver’s license. The tech industry is no exception, particularly as it relates to CSAM.

CSAM is the result of a cycle of production, distribution, and possession. All three of these stages are illegal at the state and federal level. Adult film production companies are required by law to have a Custodian of Records that documents and holds records of the ages of all performers. Online platforms ranging from email services to video sharing websites can be fined for knowingly hosting or facilitating the communication of CSAM.

Storage facilities can be found liable if they rent storage spaces to individuals who use them to commit crimes or store illegal items. The tech industry cannot claim a blanket exemption from these duties.

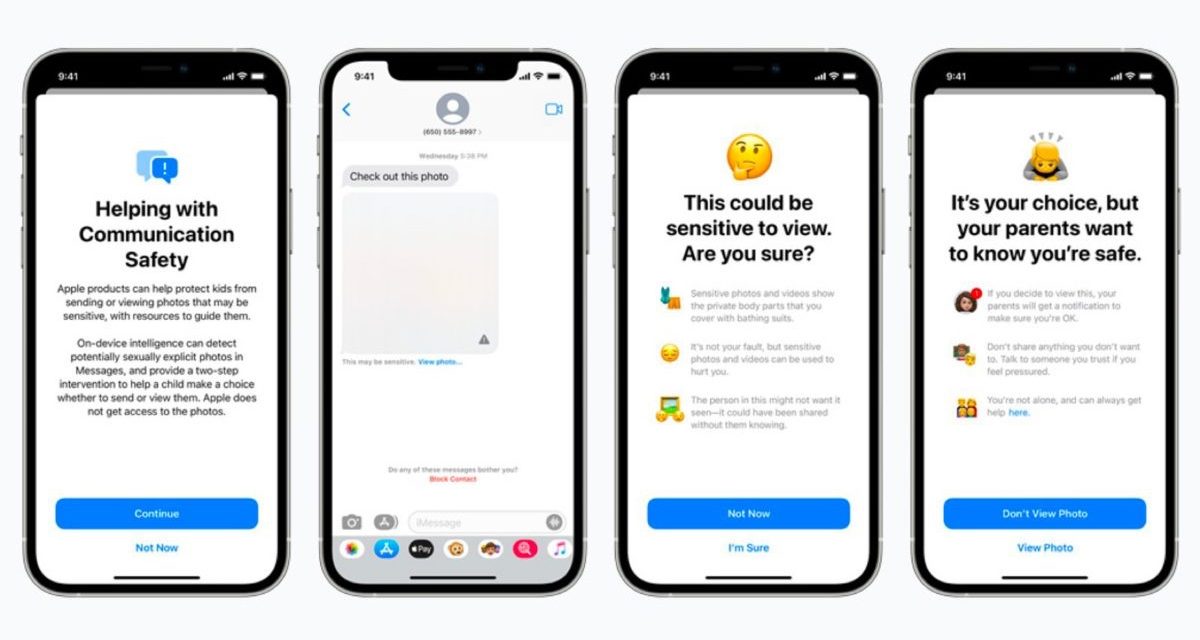

On August 6, 2021, Apple previewed new child safety features for its devices that were due to arrive later that year. The announcement stirred up considerable controversy. Here was the gist of Apple’s announcement: Apple is introducing new child safety features in three areas, developed in collaboration with child safety experts. First, new communication tools will enable parents to play a more informed role in helping their children navigate communication online. The Messages app will use on-device machine learning to warn about sensitive content, while keeping private communications unreadable by Apple.

Next, iOS and iPadOS will use new applications of cryptography to help limit the spread of CSAM online, while designing for user privacy. CSAM detection will help Apple provide valuable information to law enforcement on collections of CSAM in iCloud Photos.

Finally, updates to Siri and Search provide parents and children expanded information and help if they encounter unsafe situations. Siri and Search will also intervene when users try to search for CSAM-related topics.

However, in a September 3, 2021, statement to 9to5Mac, Apple said it was delaying its controversial CSAM detection system and child safety features.

Here’s Apple’s statement: Last month we announced plans for features intended to help protect children from predators who use communication tools to recruit and exploit them, and limit the spread of Child Sexual Abuse Material. Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features.

Article provided with permission from AppleWorld.Today